Long-Running PHP in Production: WebSocket Servers That Never Sleep

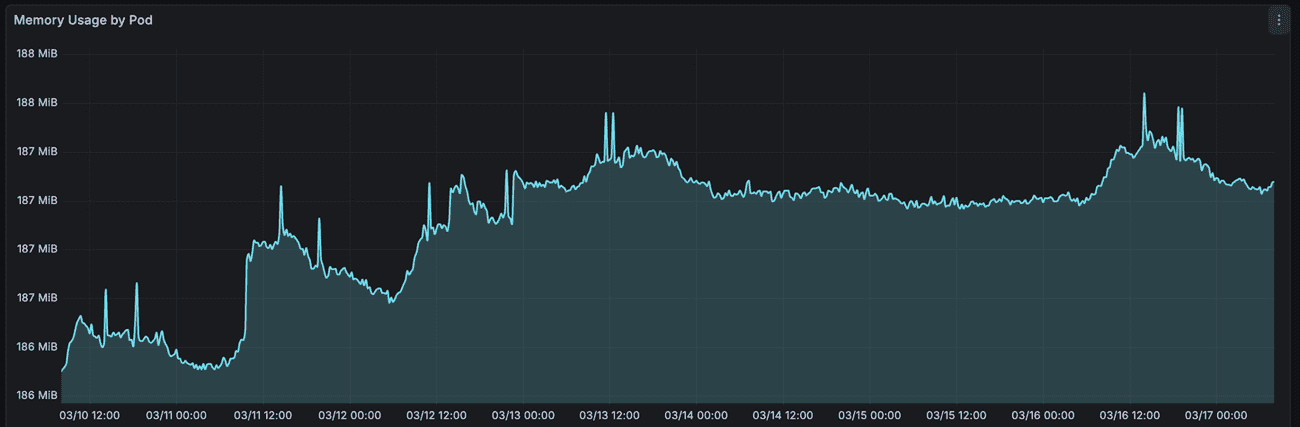

We've been running a real-time event delivery server in PHP for six years. It handles thousands of WebSocket connections a day, and its memory usage looks like a flat line.

In 2019, when we implemented it, the go-to solution was a Node.js server with Socket.IO. But after some discussion and considering our infrastructure limitations, we decided to give ReactPHP a try.

If you're wondering whether PHP can do this, here's exactly how it works.

The Challenge

Requirement: when something changes in the data (a deal moves to the next stage, a note gets added to a client record, a task is assigned to an account manager), other users looking at the same data should see it immediately. No refresh, no polling.

It was a system we did not build, and we took over development after it already had a large user base. The updates were implemented using polling. The UI requested updates every 5 seconds. The issue was that the query to fetch them was a bit heavy, and there was no way to optimize it.

The Solution

We had already made a couple of changes to the system to add more integrations and features. Our key concept was an event-driven architecture, which let us add new integrations without rewriting everything. The same approach made it easy to add real-time updates over WebSockets.

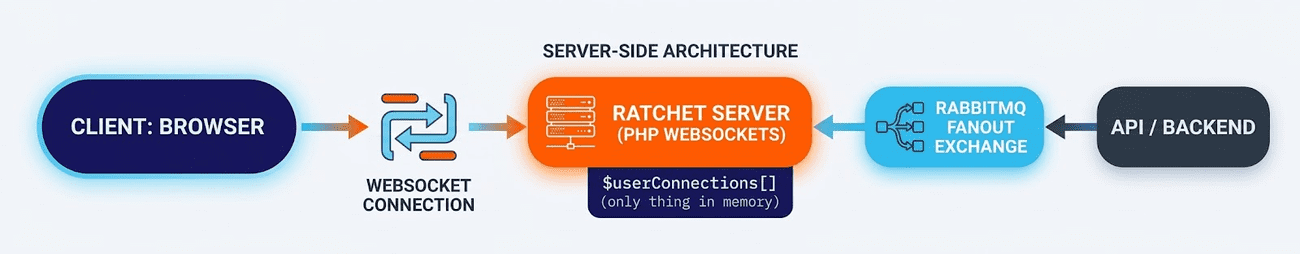

The backend publishes an event to RabbitMQ. The WebSocket server receives it and delivers it to the appropriate connected users in real time.

The flow is simple:

One process. Persistent TCP connections on one side, a message queue on the other. Events flow through.

By "appropriate users" we mean two things. First, this is a multi-tenant system in which all tenants share the same infrastructure, so updates must be isolated by tenant. Second, within a tenant, a user should receive updates only about what they are currently viewing, so we need to support "topics".
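The two routing rules can be sketched as a small in-memory registry. This is a minimal illustration, not the production code: the class name `TopicRegistry`, the topic string format, and the idea of keeping subscriptions in memory are all assumptions made for the example.

```php
<?php
declare(strict_types=1);

/**
 * Hypothetical subscription registry: tenant ID -> topic -> user IDs.
 * Names and structure are illustrative, not the production implementation.
 */
final class TopicRegistry
{
    /** @var array<int, array<string, array<int, true>>> */
    private array $subscriptions = [];

    public function subscribe(int $tenantId, string $topic, int $userId): void
    {
        $this->subscriptions[$tenantId][$topic][$userId] = true;
    }

    public function unsubscribe(int $tenantId, string $topic, int $userId): void
    {
        unset($this->subscriptions[$tenantId][$topic][$userId]);
        // Drop empty branches so the array does not accumulate dead keys.
        if (empty($this->subscriptions[$tenantId][$topic])) {
            unset($this->subscriptions[$tenantId][$topic]);
        }
        if (empty($this->subscriptions[$tenantId])) {
            unset($this->subscriptions[$tenantId]);
        }
    }

    /** @return int[] user IDs that should receive an event for this tenant and topic */
    public function recipients(int $tenantId, string $topic): array
    {
        return array_keys($this->subscriptions[$tenantId][$topic] ?? []);
    }
}
```

Because the tenant ID is the outermost key, an event for one tenant can never resolve to a user of another tenant, even when both use the same topic name.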

The entry point: a Symfony command that never returns

There's no custom process manager, no separate daemon framework. The server is a plain Symfony console command with a load balancer in front of it.

It takes ClientFactory (wraps the RabbitMQ connection), UserProvider and UserRightsProvider (the same classes the HTTP layer uses — no duplication, no second auth system), and EventRepository as constructor dependencies.

It's wired up in services.yml like anything else — autowire: true, autoconfigure: true, #[AsCommand] handles the tag.

The only thing unusual about the execute() method is its last line:

    $this->loop->run(); // Never returns

Everything else (timers, signal handlers, the WebSocket server, the RabbitMQ consumer) gets registered before that call. Then the event loop takes over.

The event loop

ReactPHP's event loop is single-threaded, non-blocking I/O. It doesn't spawn threads. It doesn't sleep(). It watches file descriptors and fires callbacks when they're ready — a new TCP connection, an incoming WebSocket message, a message from RabbitMQ. While it's waiting for I/O, nothing burns CPU.
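To make "watches file descriptors and fires callbacks" concrete, here is a toy loop built on PHP's `stream_select()`. It is a sketch of the general technique only: `TinyLoop` is a made-up class, and ReactPHP's real loop additionally handles timers, signals, and write events.

```php
<?php
declare(strict_types=1);

/**
 * Toy single-threaded loop: waits on streams with stream_select() and calls
 * the registered callback for each stream that becomes readable.
 * Illustrative only; this is not how ReactPHP is implemented internally.
 */
final class TinyLoop
{
    /** @var array<int, resource> */
    private array $streams = [];
    /** @var array<int, callable> */
    private array $callbacks = [];
    private bool $running = false;

    public function onReadable($stream, callable $callback): void
    {
        $id = (int) $stream;
        $this->streams[$id] = $stream;
        $this->callbacks[$id] = $callback;
    }

    public function remove($stream): void
    {
        $id = (int) $stream;
        unset($this->streams[$id], $this->callbacks[$id]);
    }

    public function stop(): void
    {
        $this->running = false;
    }

    public function run(): void
    {
        $this->running = true;
        while ($this->running && $this->streams !== []) {
            $read = $this->streams;
            $write = null;
            $except = null;
            // Blocks in the kernel until a stream is readable; no busy waiting.
            if (stream_select($read, $write, $except, null) === false) {
                break;
            }
            foreach ($read as $stream) {
                // A previous callback may have removed this stream already.
                $callback = $this->callbacks[(int) $stream] ?? null;
                if ($callback !== null) {
                    $callback($stream, $this);
                }
            }
        }
    }
}
```

Registering a callback does nothing by itself; work only happens once `run()` is called and a stream becomes readable, which mirrors how the server's setup code behaves.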

Here's the full execute() method. Read it top to bottom and notice what's happening: everything is just registering callbacks. Nothing actually runs until the last line:

    protected function execute(InputInterface $input, OutputInterface $output): int
    {
        $this->loop = Loop::get();
        $this->stream = new Stream($this->userProvider, $this->userRightsProvider, $this->eventRepository);

        // Connect to RabbitMQ and start consuming (async, non-blocking)
        $this->setupQueue();

        // Bind the WebSocket server to the event loop
        $socket = new SocketServer("0.0.0.0:8080", [], $this->loop);
        new IoServer(new HttpServer(new WsServer($this->stream)), $socket);

        // Ping all connections; keeps Cloudflare from closing idle sockets
        $this->loop->addPeriodicTimer(60, fn() => $this->stream->sendPingMessageToAll());

        // Graceful shutdown on pod termination
        if (defined('SIGINT')) {
            $this->loop->addSignal(SIGINT, function () {
                $this->stream->disconnectAll();
                $this->loop->stop();
            });
        }

        // This blocks forever. Everything above just registered callbacks.
        $this->loop->run();

        return Command::SUCCESS;
    }

Two things worth calling out:

60 seconds: ping. Cloudflare (and most load balancers) will kill idle WebSocket connections after 100 seconds of silence. The ping resets that clock. It also does something else useful; more on that below.

SIGINT: graceful shutdown. When a pod is terminated, we get a signal first. We close all connections cleanly before exiting, rather than letting clients discover the server is gone on their next message. Note that Kubernetes sends SIGTERM by default, so this relies on the container's stop signal being set to SIGINT; otherwise, register the same handler for SIGTERM.

Stream - The WebSocket layer

Stream is where we implement the WebSocket behavior.

SocketServer is ReactPHP — it handles raw TCP. IoServer, HttpServer, WsServer are Ratchet — they handle the HTTP upgrade handshake and the WebSocket protocol framing. Our code only ever sees $this->stream.

Stream implements Ratchet's MessageComponentInterface, which means four methods:

onOpen

TCP connection is open. We send an acknowledgement. The connection is not yet authenticated — we don't store it yet.

onMessage

This is where authentication happens. The client's first message must be a JSON payload with an access token:

    public function onMessage(ConnectionInterface $from, $msg): void
    {
        $messageData = json_decode(trim($msg), true);
        $userToken = $messageData['access_token'] ?? null;

        if (!$userToken) {
            $from->send(json_encode(['status' => 'error', 'error' => 'access_token not present']));
            return;
        }

        $user = $this->userProvider->findUserByWebsocketAccessToken($userToken);

        if (!$user) {
            $from->send(json_encode(['status' => 'error', 'error' => 'access denied']));
            return;
        }

        // Store the connection in the map: user -> connection
        $this->userConnections[$user->getId()][] = $from;

        $from->send(json_encode([
            'status' => 'ok',
            'connected' => true,
            'message' => 'Connected as user ' . $user->getId(),
        ]));
    }

WebSocket access tokens are generated by the backend when the user session is started. findUserByWebsocketAccessToken matches the token to a user record.
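Since the first frame comes from an untrusted client, it can help to validate its shape before it reaches the user lookup. The helper below is a hypothetical sketch (the name `extractAccessToken` is not part of the original code) that accepts only a JSON object carrying a non-empty string token.

```php
<?php
declare(strict_types=1);

/**
 * Hypothetical helper: returns the access token from a raw client frame,
 * or null when the frame is not a JSON object with a non-empty string token.
 */
function extractAccessToken(string $rawMessage): ?string
{
    $data = json_decode(trim($rawMessage), true);
    if (!is_array($data)) {
        return null; // invalid JSON, or a JSON scalar such as "abc" or 42
    }
    $token = $data['access_token'] ?? null;

    return (is_string($token) && $token !== '') ? $token : null;
}
```

With a helper like this, a token sent as a number or an array is rejected up front instead of being passed on to the lookup, which matters in a process where one uncaught error affects every connected user.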

onClose

The connection is removed from the map. If that was the user's last connection, the user key is removed too. The unset is explicit, so PHP's reference counting can free the connection object as soon as nothing else references it. Forgetting the unset would leak memory.
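The removal logic described above might look roughly like this. It is a sketch under assumptions: `ConnectionMap` is a made-up wrapper class, and plain objects stand in for Ratchet's ConnectionInterface so the example runs on its own.

```php
<?php
declare(strict_types=1);

/**
 * Illustrative connection map: user ID -> list of connection objects.
 * Plain objects stand in for Ratchet's ConnectionInterface.
 */
final class ConnectionMap
{
    /** @var array<int, object[]> */
    private array $userConnections = [];

    public function add(int $userId, object $connection): void
    {
        $this->userConnections[$userId][] = $connection;
    }

    /** Removes the connection wherever it is stored and drops empty user keys. */
    public function remove(object $connection): void
    {
        foreach ($this->userConnections as $userId => $connections) {
            foreach ($connections as $index => $candidate) {
                if ($candidate === $connection) {
                    unset($this->userConnections[$userId][$index]);
                }
            }
            if ($this->userConnections[$userId] === []) {
                unset($this->userConnections[$userId]);
            }
        }
    }

    public function userCount(): int
    {
        return count($this->userConnections);
    }

    /** @return object[] */
    public function connectionsFor(int $userId): array
    {
        return array_values($this->userConnections[$userId] ?? []);
    }
}
```

Removing the empty user key is the part that is easy to forget: without it the map keeps one empty array per user who ever connected.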

onError

Log the exception, close the connection. The connection map is cleaned up by onClose, which Ratchet calls automatically after onError. No state lingers.

The connection map — and why memory is flat

The entire state of the server lives in one property:

    private array $userConnections = []; // array<int, array<ConnectionInterface>>

Keys are user IDs. Values are arrays of ConnectionInterface objects, one per open browser tab. That's it. No message history, no queues, no caches.

Memory grows when users connect. Memory shrinks when they disconnect. The unset in onClose makes sure of that. What you see in the 24-hour graph isn't ReactPHP being clever — it's PHP's garbage collector doing its job because we gave it nothing to hold onto.

RabbitMQ: async AMQP on the same event loop

The RabbitMQ client is Bunny's async variant, and it integrates directly with the ReactPHP loop. Setup is a promise chain. If you're used to async/await or fibers, the .then() style takes a minute to read — it's promises, same idea, different syntax:

    $this->client->connect()
        ->then(fn(AsyncClient $client) => $client->channel())
        ->then(function (Channel $channel) use ($rabbitConfig, $queueName) {
            return all([
                $channel,
                $channel->queueDeclare($queueName, autoDelete: true),
                $channel->exchangeDeclare($rabbitConfig->getExchangeName(), AMQPExchangeType::FANOUT),
                $channel->queueBind($queueName, $rabbitConfig->getExchangeName()),
            ]);
        })
        ->then(function (array $results) use ($queueName) {
            // PHP cannot destructure inside a parameter list, so unpack here
            [$channel] = $results;

            return $channel->consume([$this, 'processMessage'], $queueName, noAck: true);
        }, function (\Exception $e) {
            $this->logger->error('RABBIT', ['message' => $e->getMessage()]);
            $this->stream->disconnectAll();
            $this->loop->stop();
            exit(1);
        });

The error handler calls exit(1). In Kubernetes this makes the container restart, and because the queue is declared with autoDelete: true, it gets recreated fresh on reconnect. Failure recovery is just a restart; there's nothing to clean up manually.

A few things worth calling out:

autoDelete: true on the queue means it disappears when the consumer disconnects. No stale queues piling up in RabbitMQ when pods restart. With multiple pods running this server, each pod gets its own queue, all bound to the same exchange. This matters most when releasing a new version of the app, while old and new pods run side by side.

The exchange is a FANOUT. Every pod receives every event message. Each pod then delivers only to the users connected to it. Routing is local, not at the broker level.

noAck: true means messages are auto-acknowledged as soon as they're delivered — no manual confirmation needed. For live event delivery, this is the right call: if a message is lost, retrying a stale "deal stage updated" notification to a user who may have already refreshed makes no sense. The overhead of manual ack buys nothing here.

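all() is React's promise combinator: it resolves once every promise in the array has resolved, so queue declare, exchange declare and bind are issued together rather than awaited one by one. AMQP handles commands on a single channel in order, so the bind still runs after both declarations.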

Once consume() resolves, RabbitMQ will push messages to processMessage as they arrive. The event loop handles the delivery — no polling, no blocking wait.

    public function processMessage(Message $msg): void
    {
        $body = json_decode($msg->content, true);

        if ($body['send_to_all'] ?? false) {
            $this->stream->sendMessageToAllConnectedUsers($body['data']);
            return;
        }

        $this->stream->sendNewMessage($body['data']);
    }

The send_to_all flag is for broadcast messages: notifying all connected users about a new app version, a maintenance window, or any system-level event.
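In a long-running process, a malformed queue payload is worth guarding against, because an exception thrown inside a loop callback can take the whole server down. The helper below is a hypothetical sketch (`decodeEvent` and its return shape are invented for illustration, and it assumes `data` is always a JSON object or array) that turns a raw payload into a routing decision or null.

```php
<?php
declare(strict_types=1);

/**
 * Hypothetical helper: turns a raw queue payload into a routing decision,
 * or null when the payload is malformed and should be skipped.
 *
 * @return array{broadcast: bool, data: array}|null
 */
function decodeEvent(string $content): ?array
{
    $body = json_decode($content, true);
    if (!is_array($body) || !isset($body['data']) || !is_array($body['data'])) {
        return null;
    }

    return [
        // Same truthiness rule as the original `?? false` check.
        'broadcast' => (bool) ($body['send_to_all'] ?? false),
        'data' => $body['data'],
    ];
}
```

A caller could log and return early on null, then branch on `broadcast` exactly as processMessage does today.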

sendNewMessage handles routing to the right connections:

    public function sendNewMessage(array $data): void
    {
        $connections = $this->getUserConnections($data);

        foreach ($connections as $connection) {
            $connection->send(json_encode([
                'status' => 'ok',
                'data' => $data,
            ]));
        }
    }

The ping doubles as a sync mechanism

Every 60 seconds, sendPingMessageToAll sends a message to every connected client:

    public function sendPingMessageToAll(): void
    {
        foreach ($this->getAllUniqueConnections() as $connection) {
            $connection->send(json_encode([
                'status' => 'ok',
                'type' => 'system',
                'connected' => true,
                'message' => 'events ping',
                'lastEventId' => $this->eventRepository->getLastEventId(),
            ]));
        }
    }

The lastEventId is the ID of the most recent event record in the database. The client compares this against what it last received. If there's a gap (because the user was briefly disconnected, or a message was missed), the frontend can fetch the delta over HTTP. The ping is a keepalive and an eventually-consistent sync checkpoint in one message.
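The client-side comparison can be expressed in a few lines. The sketch below is written in PHP only to stay consistent with the rest of the article (the real check runs in the frontend), and it assumes event IDs increase monotonically, which the article does not state explicitly. The function name `needsResync` is made up.

```php
<?php
declare(strict_types=1);

/**
 * Hypothetical client-side check, written in PHP for consistency.
 * Assumes event IDs increase monotonically.
 */
function needsResync(?int $lastSeenEventId, int $serverLastEventId): bool
{
    if ($lastSeenEventId === null) {
        return true; // nothing received yet: fetch the initial state
    }

    // The server knows about an event the client has not seen.
    return $serverLastEventId > $lastSeenEventId;
}
```

When this returns true, the client would request everything after its last seen ID over HTTP and then carry on with the live stream.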

Beware of hidden memory leaks: Long-running processes and Monolog

There's one gotcha that isn't obvious until you've hit it: Monolog memory growth.

In a normal HTTP request, the PHP process dies at the end and takes everything with it — including any log records buffered in memory. Handlers like FingersCrossedHandler are designed around this assumption: buffer everything, flush when a trigger condition fires, then die. In a long-running process, the dying never happens. If nothing ever triggers a flush, the buffer just grows.

The fix is a periodic reset():

    if ($this->logger instanceof ResettableInterface) {
        $this->loop->addPeriodicTimer(300, fn() => $this->logger->reset());
    }

ResettableInterface has been in Monolog since version 1.24. Calling reset() clears all handler buffers and resets processors. Five minutes is conservative; tune it to your log volume. Without it, a server that logs heavily will slowly climb in memory with no obvious cause.
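The growth pattern is easier to see in a toy model. The class below is not Monolog code; `BufferingHandler` is a made-up stand-in that only mimics the buffer-until-triggered behaviour described above, with "error" as the assumed trigger level.

```php
<?php
declare(strict_types=1);

/**
 * Toy model of a buffer-until-triggered log handler. This is NOT Monolog;
 * it only mimics the behaviour described in the text.
 */
final class BufferingHandler
{
    /** @var string[] */
    private array $buffer = [];
    public int $flushedCount = 0;

    public function handle(string $level, string $message): void
    {
        $this->buffer[] = "[$level] $message";
        if ($level === 'error') {
            // Trigger condition: emit everything buffered so far.
            $this->flushedCount += count($this->buffer);
            $this->buffer = [];
        }
    }

    public function reset(): void
    {
        $this->buffer = []; // discard buffered records without emitting them
    }

    public function bufferedCount(): int
    {
        return count($this->buffer);
    }
}
```

In a request-scoped process the buffer dies with the request. In a process that never exits and rarely logs an error, the buffer only ever shrinks when something calls reset().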

Production limits and when to look elsewhere

This is an event loop — a single thread. Everything running inside it must be fast and non-blocking. Our message routing does make one synchronous database call to resolve which users have access to a given record, which technically blocks the loop for the duration of that query. At our current message volume — a few hundred events per minute — that query completes in under a millisecond and the impact is imperceptible. If you're processing hundreds of events per second, that adds up fast and the loop will start to lag. The fix is either caching access rules in memory or switching to an async database driver; ReactPHP itself isn't the ceiling, the synchronous operations you put inside it are.
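The "cache access rules in memory" option could be sketched as a small TTL cache. Everything here is hypothetical: the class name `AccessCache`, the 30-second default, and the record key format are assumptions, and the loader callable stands in for the existing synchronous query.

```php
<?php
declare(strict_types=1);

/**
 * Hypothetical in-memory cache with a TTL and a size cap, so it cannot grow
 * without bound in a long-running process. Names are illustrative.
 */
final class AccessCache
{
    /** @var array<string, array{expires: float, userIds: int[]}> */
    private array $entries = [];

    public function __construct(
        private float $ttlSeconds = 30.0,
        private int $maxEntries = 10000,
    ) {
    }

    /**
     * @param callable(): int[] $loader runs the (blocking) lookup on a miss
     * @return int[]
     */
    public function get(string $recordKey, callable $loader, ?float $now = null): array
    {
        $now ??= microtime(true);
        $entry = $this->entries[$recordKey] ?? null;
        if ($entry !== null && $entry['expires'] > $now) {
            return $entry['userIds'];
        }
        if (count($this->entries) >= $this->maxEntries) {
            $this->entries = []; // crude eviction: start over rather than leak
        }
        $userIds = $loader();
        $this->entries[$recordKey] = [
            'expires' => $now + $this->ttlSeconds,
            'userIds' => $userIds,
        ];

        return $userIds;
    }
}
```

The trade-off is staleness: a user whose access was revoked could keep receiving events for up to one TTL, so the TTL should be chosen with that in mind. The size cap exists for the same reason as the unset in onClose: anything held in memory by a process that never exits needs an explicit way out.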

On tooling: when we built this in 2019, ReactPHP + Ratchet was the only credible production-ready option for async WebSockets in PHP. The ecosystem has since caught up — Swoole/OpenSwoole matured, PHP 8.1 brought fibers which enabled Amp v3's cleaner API, and RoadRunner now offers WebSocket support too. If you need true multi-threading or want coroutine-style code without promise chains, Swoole is worth a serious look. FrankenPHP is an excellent modern app server, but it does not support WebSockets — you would still need a separate process for that. But the core idea is identical across all of them: one event loop, non-blocking I/O, PHP running indefinitely. The pattern is the point.

Another thing a trained eye will notice is that the scenario described is a natural fit for Server-Sent Events (SSE). SSE works well for real-time updates where you do not need two-way communication. Yes, it's a better solution now, but in 2019 it wasn't.

The result

Six years. Thousands of connections a day. No memory leaks. No crashes.

PHP processes can run for days, weeks, or months. ResettableInterface handles Monolog buffers. unset handles the connection map. ReactPHP handles the I/O. Ratchet handles the protocol. Bunny handles the broker. Symfony handles everything else.

The "PHP can't do this" take usually comes from people who stopped looking at the ecosystem around the time mysql_* was deprecated. The libraries are mature, the patterns are established, and the code is — as you can see — not particularly exotic.

If you have a PHP team, a PHP codebase, and a need for real-time delivery, you don't need to reach for a second runtime. Keep in mind that we did this in 2019; now, in 2026, you have even more options and more flexibility.

This is the first post in a series on async PHP. The next post tackles even more async possibilities in PHP, considering what is available now and what was not available in 2019.